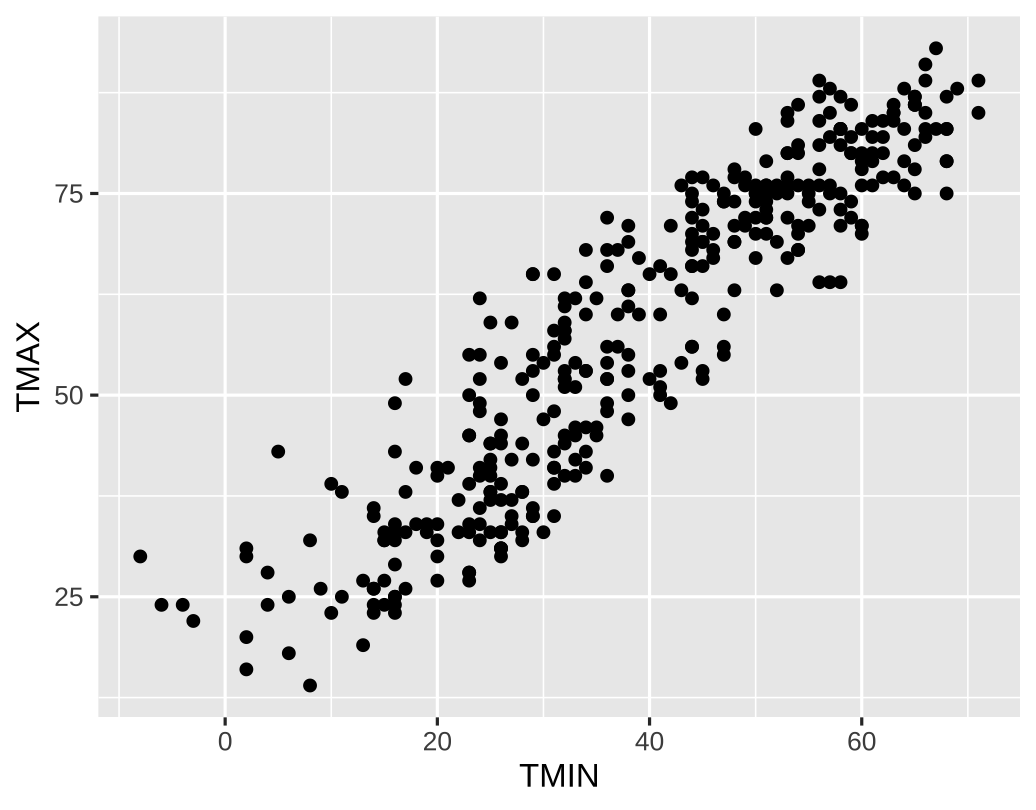

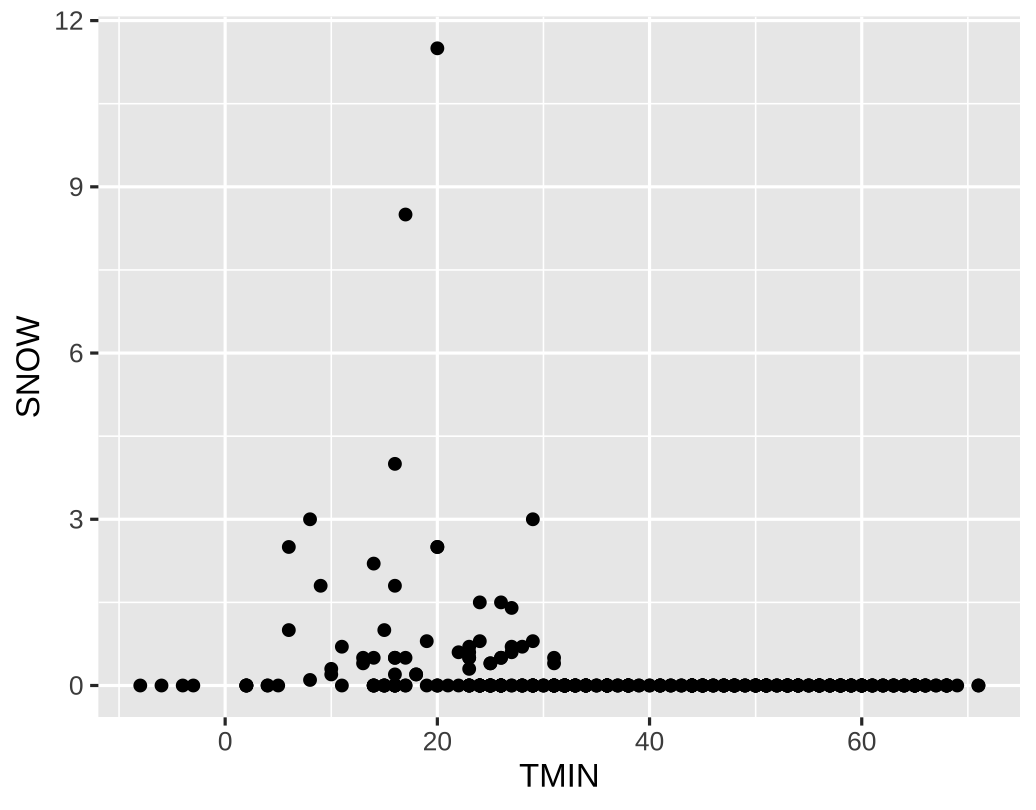

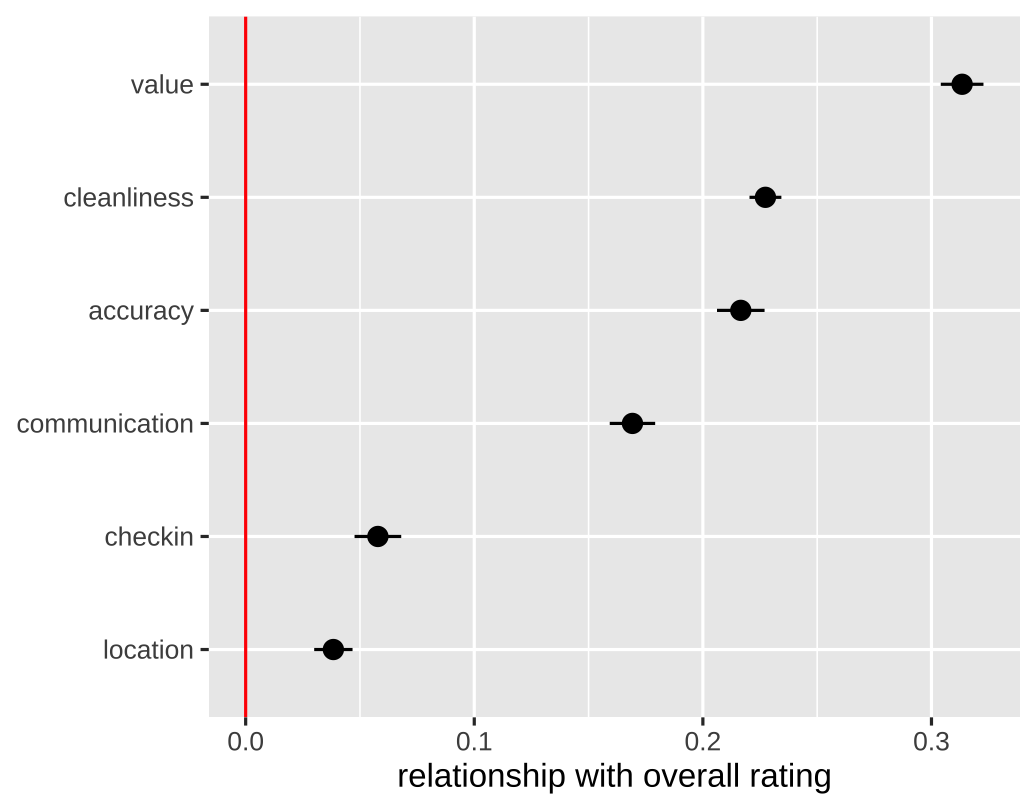

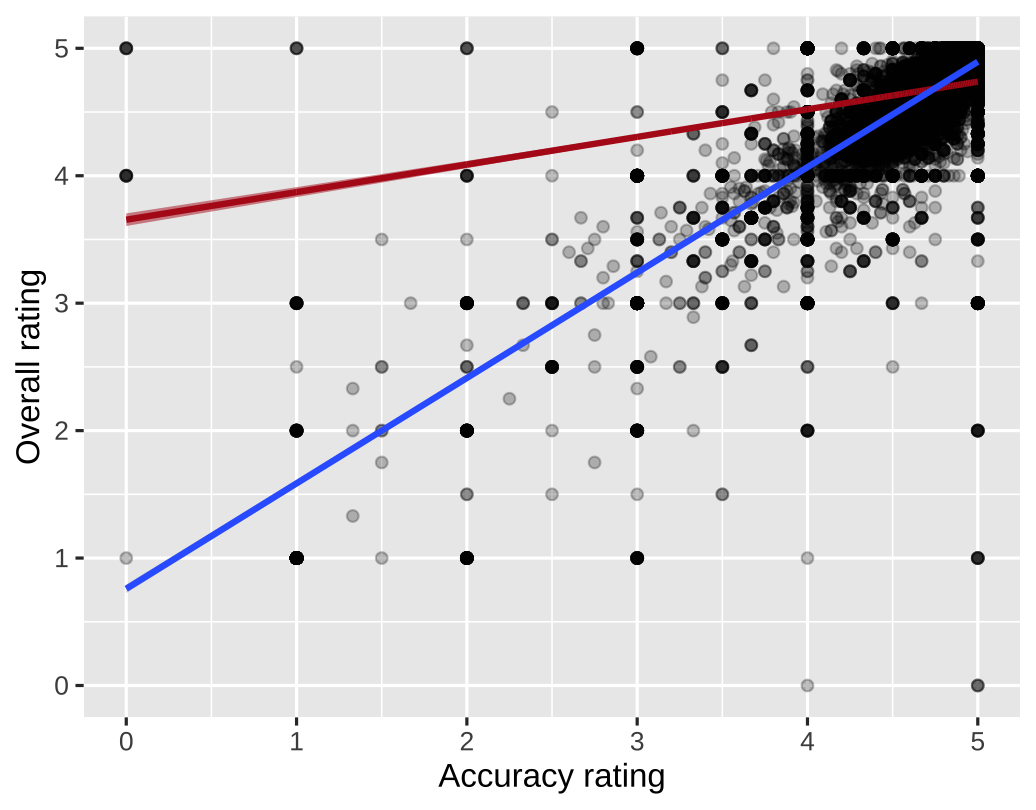

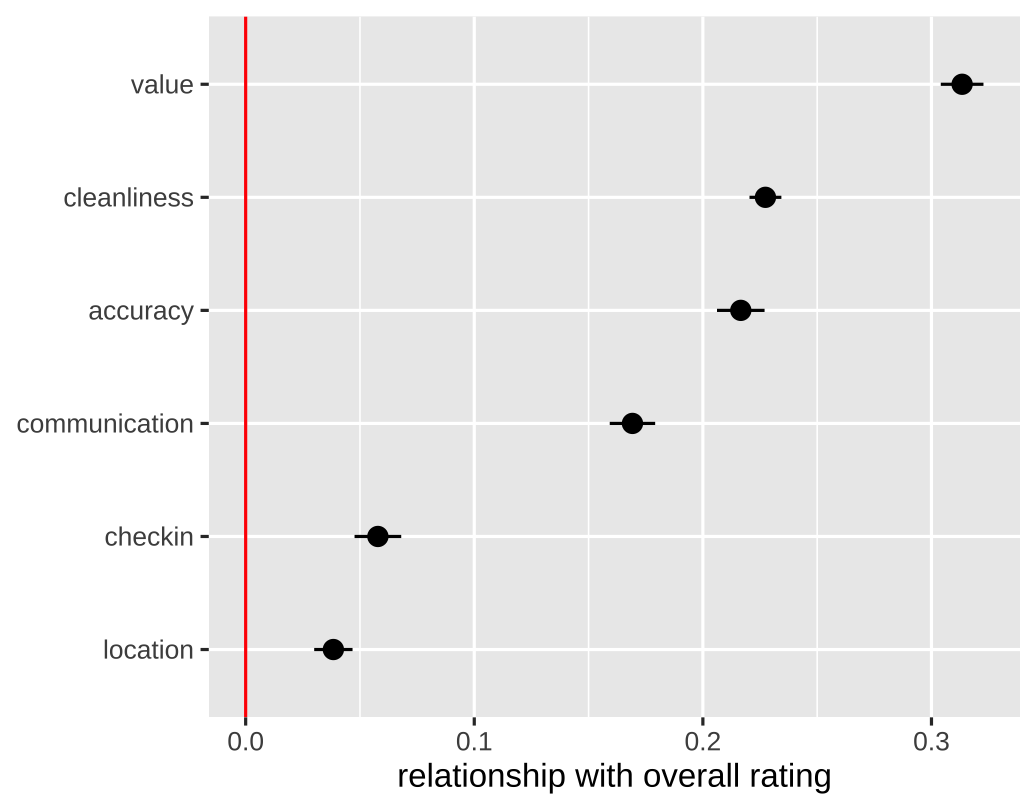

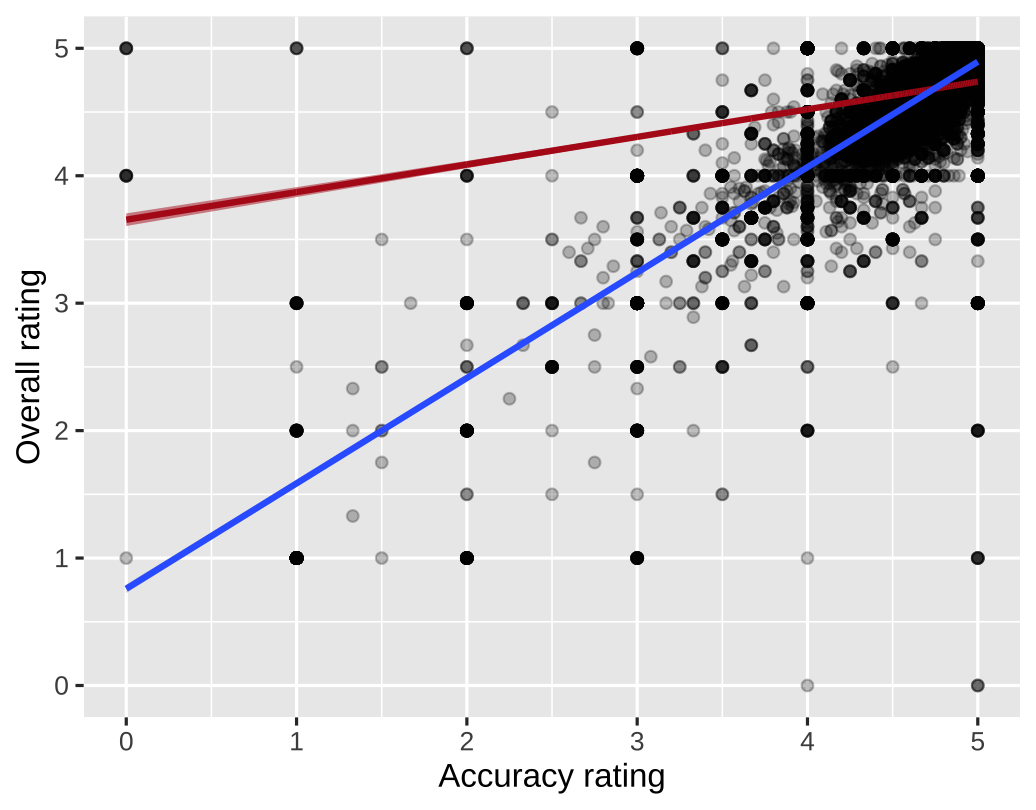

class: center middle main-title section-title-4 # Relationships .class-info[ **Week 10** AEM 2850 / 5850 : R for Business Analytics<br> Cornell Dyson<br> Fall 2025 Acknowledgements: [Andrew Heiss](https://datavizm20.classes.andrewheiss.com) <!-- [Claus Wilke](https://wilkelab.org/SDS375/) --> <!-- [Grant McDermott](https://github.com/uo-ec607/lectures), --> <!-- [Jenny Bryan](https://stat545.com/join-cheatsheet.html), --> <!-- [Allison Horst](https://github.com/allisonhorst/stats-illustrations) --> ] --- # Announcements **Homework - Week 9** was due last night - We posted it on posit cloud and gradescope, but not canvas - So I have extended the deadline through this Friday, Oct 31 **Homework - Week 10** will not exist, to give time for the group project **Homework - Week 11** will be done in class, to give time for the group project **Group projects** are due Friday, November 14 - Make a plan and start early! -- Questions before we get started? --- # Plan for this week .pull-left[ ### Tuesday [Prologue: The dangers of dual y-axes](#dual-y-axes) [Visualizing relationships between a numerical and a categorical variable](#correlations-categorical) [Visualizing correlations](#correlations) [example-10-1](#example-10-1) ] .pull-right[ ### Thursday [Visualizing regressions](#regressions) [example-10-2](#example-10-2) ] --- class: inverse, center, middle name: dual-y-axes # Prologue: The dangers of dual y-axes --- # Oh no! .small.center[ <figure style="padding-top: 5px;"> <img src="img/10/spurious-correlation.svg" alt="Spurious correlation between Mount Everest climbs and hotdog consumption" title="Spurious correlation between Mount Everest climbs and hotdog consumption" width="100%"> <figcaption>Source: <a href="https://www.tylervigen.com/spurious-correlations" target="_blank">Tyler Vigen's spurious correlations</a></figcaption> </figure> ] ??? Source: https://www.tylervigen.com/spurious/correlation/1159_total-number-of-successful-mount-everest-climbs_correlates-with_hotdogs-consumed-by-nathans-hot-dog-eating-competition-champion --- # GPT 3.5 and DALL·E 3 explainer .pull-left[ "As the number of successful Mount Everest climbs rises, so does the peak appetite for adventure. This, in turn, creates a sausage-yetis-faction where competitors are relishing the thrill of the challenge like never before, and they're on a roll to claim the title. It's a summit showdown of epic proportions, where each contender is truly reaching their peak performance..." ] .pull-right[ <figure> <img src="img/10/spurious-correlation-ai.jpg" alt="Mount Everest hot dog eating competition image generated by dalle-3" title="Mount Everest hot dog eating competition" width="100%"> </figure> ] --- # Why not use two y-axes? -- You have to choose where the y-axes start and stop, which means... -- ...you can force the two trends to line up however you want. --- # It even happens in *The Economist*! .center[ <figure> <img src="img/10/economist-dogs.png" alt="Dog neck size and weight in The Economist" title="Dog neck size and weight in The Economist" width="85%"> </figure> The revised axes ranges reflect a comparable proportional change ] ??? <https://medium.economist.com/mistakes-weve-drawn-a-few-8cdd8a42d368> --- # What could we do instead? -- .pull-left[ **Use multiple plots (e.g., facets)** <img src="10-slides_files/figure-html/solar-data-facet-1.png" width="100%" style="display: block; margin: auto;" /> ] -- .pull-right[ **Use scatter plots** <img src="10-slides_files/figure-html/solar-data-scatter-1.png" width="100%" style="display: block; margin: auto;" /> ] --- # When are dual y-axes defensible? -- When the two axes measure the same thing (e.g., indexing, conversion, etc.) -- <img src="10-slides_files/figure-html/ithaca-weather-dual-nice-1.png" width="100%" style="display: block; margin: auto;" /> --- class: inverse, center, middle name: correlations-categorical # Visualizing relationships between<br>a numerical and a categorical variable --- # We already did this! When? -- .pull-left[ <img src="10-slides_files/figure-html/listings-facet-1.png" width="100%" style="display: block; margin: auto;" /> ] .pull-right[ <img src="10-slides_files/figure-html/listings-ridgeline-1.png" width="100%" style="display: block; margin: auto;" /> ] --- class: inverse, center, middle name: correlations-numerical # Visualizing relationships between<br>two numerical variables --- class: inverse, center, middle name: correlations # Visualizing correlations --- # What does "correlation" mean to you? -- As the value of X goes up, Y is very / a little / not at all likely to go up (down) $$ \rho_{X, Y} = \frac{\operatorname{cov}(X, Y)}{\sigma_X \sigma_Y} $$ Says nothing about *how much* Y changes when X changes --- # Correlation values .pull-left[ <table> <tr> <th class="cell-left">$$\rho$$</th> <th class="cell-left">Rough meaning</th> </tr> <tr> <td class="cell-left">±0.1–0.3 </td> <td class="cell-left">Weak</td> </tr> <tr> <td class="cell-left">±0.3–0.5</td> <td class="cell-left">Moderate</td> </tr> <tr> <td class="cell-left">±0.5–0.8</td> <td class="cell-left">Strong</td> </tr> <tr> <td class="cell-left">±0.8–0.9</td> <td class="cell-left">Very strong</td> </tr> </table> ] .pull-right[ <img src="10-slides_files/figure-html/correlation-grid-1.png" width="100%" style="display: block; margin: auto;" /> ] --- # Scatter plots The humble scatter plot is often the best place to start when studying the association between two variables -- **Example:** max and min temperature in Ithaca each day of the year - Do you think they are highly correlated, somewhat correlated, or not at all correlated? - What sign do you think this correlation has? - How would you make a scatter plot of these data in R? --- # Scatter plots .left-code[ ``` r ithaca_weather |> ggplot(aes(x = TMIN, y = TMAX)) + geom_point() ``` ``` r ithaca_weather |> * summarize(cor(TMIN, TMAX)) ``` ``` ## # A tibble: 1 × 1 ## `cor(TMIN, TMAX)` ## <dbl> ## 1 0.919 ``` **Strong positive correlation** ] .right-plot[  ] --- # What about min temp and snowfall? -- .left-code[ ``` r ithaca_weather |> ggplot(aes(x = TMIN, y = SNOW)) + geom_point() ``` ``` r ithaca_weather |> * summarize(cor(TMIN, SNOW)) ``` ``` ## # A tibble: 1 × 1 ## `cor(TMIN, SNOW)` ## <dbl> ## 1 -0.239 ``` **Weak negative correlation** ] .right-plot[  ] --- class: inverse, center, middle name: example-10-1 # example-10-1:<br>relationships-practice.R --- class: inverse, center, middle name: regressions # Visualizing regressions --- # Linear regression reminder $$ y = \beta_0 + \beta_1 x_1 + \varepsilon $$ <table> <tr> <!-- <td class="cell-center">\(y\)</td> --> <td class="cell-center">\(y\)</td> <td class="cell-left"> Outcome variable (DV)</td> </tr> <tr> <!-- <td class="cell-center">\(x\)</td> --> <td class="cell-center">\(x_1\)</td> <td class="cell-left"> Explanatory variable (IV)</td> </tr> <tr> <!-- <td class="cell-center">\(a\)</td> --> <td class="cell-center">\(\beta_1\)</td> <td class="cell-left"> Slope</td> </tr> <tr> <!-- <td class="cell-center">\(b\)</td> --> <td class="cell-center">\(\beta_0\)</td> <td class="cell-left"> y-intercept</td> </tr> <tr> <!-- <td class="cell-center">  </td> --> <td class="cell-center"> \(\varepsilon\) </td> <td class="cell-left"> Error (residuals)</td> </tr> </table> --- # Linear regression is just drawing lines .pull-left[ <img src="10-slides_files/figure-html/review-line-1.png" width="100%" style="display: block; margin: auto;" /> ] -- .pull-right[ <img src="10-slides_files/figure-html/review-residuals-1.png" width="100%" style="display: block; margin: auto;" /> ] --- # Building models in R Base R has some basic modeling tools: ``` r <MODEL> <- lm(<Y> ~ <X>, data = <DATA>) # use lm to fit simple linear models summary(<MODEL>) # see model details ``` -- The `broom` package provides helpful tools for tidying model output: ``` r library(broom) # convert model estimates to a data frame for plotting tidy(<MODEL>) ``` --- # Modeling Airbnb reviews Let's use some real-world data to explore linear regression Put yourself in the shoes of an Airbnb host trying to decide how much to invest in improvements across these categories: .center[ <figure> <img src="img/10/airbnb-reviews.png" alt="Airbnb reviews" title="Airbnb reviews" width="100%"> </figure> ] Let's see how well "accuracy" reviews predict an Airbnb's overall rating --- # Modeling Airbnb reviews .pull-left[ $$ \text{rating} = \beta_0 + \beta_1 \text{accuracy} + \varepsilon $$ ``` r review_model <- lm( rating ~ accuracy, data = reviews ) ``` Note how we didn't write anything for the `\(\beta_0\)` or `\(\varepsilon\)` terms What do you think the sign on `\(\beta_1\)` is? How large do you think `\(\beta_1\)` is? ] -- .pull-right[ ``` r review_model ``` ``` ## *## Call: *## lm(formula = rating ~ accuracy, data = reviews) ## *## Coefficients: *## (Intercept) accuracy *## 0.7590 0.8271 ``` ] --- # Modeling Airbnb reviews ``` r summary(review_model) ``` ``` ## *## Call: *## lm(formula = rating ~ accuracy, data = reviews) ## ## Residuals: ## Min 1Q Median 3Q Max ## -4.8943 -0.0648 0.0608 0.1057 4.2410 ## *## Coefficients: *## Estimate Std. Error t value Pr(>|t|) *## (Intercept) 0.758952 0.017156 44.24 <2e-16 *** *## accuracy 0.827067 0.003597 229.94 <2e-16 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 0.2996 on 28159 degrees of freedom ## (10116 observations deleted due to missingness) ## Multiple R-squared: 0.6525, Adjusted R-squared: 0.6525 ## F-statistic: 5.287e+04 on 1 and 28159 DF, p-value: < 2.2e-16 ``` --- # Modeling Airbnb reviews ``` r tidy(review_model, conf.int = TRUE) ``` ``` ## # A tibble: 2 × 7 ## term estimate std.error statistic p.value conf.low conf.high ## <chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> ## 1 (Intercept) 0.759 0.0172 44.2 0 0.725 0.793 ## 2 accuracy 0.827 0.00360 230. 0 0.820 0.834 ``` <!-- -- --> --- # Interpretation for a continuous variable $$ y = \beta_0 + \beta_1 x_1 + \varepsilon $$ -- On average, a one unit increase in `\(x_1\)` is *associated* with a `\(\beta_1\)` change in `\(y\)` -- $$ \text{rating} = \beta_0 + \beta_1 \text{accuracy} + \varepsilon $$ $$ \widehat{\text{rating}} = 0.76 + 0.83 \times \text{accuracy} $$ On average, a one unit increase in accuracy rating is associated with 0.83 higher overall rating -- **This is easy to visualize: it's a line!** --- # Visualization of a continuous variable .pull-left[ ``` r tidy(review_model) |> select(term, estimate) ``` ``` ## # A tibble: 2 × 2 ## term estimate ## <chr> <dbl> ## 1 (Intercept) 0.759 ## 2 accuracy 0.827 ``` $$ \widehat{\text{rating}} = 0.76 + 0.83 \times \text{accuracy} $$ Note: this is an example where `alpha` helps with overplotting ] .pull-right[ <img src="10-slides_files/figure-html/review-line-again-1.png" width="100%" style="display: block; margin: auto;" /> ] --- # Visualization of a continuous variable .pull-left[ ``` r tidy(review_model) |> select(term, estimate) ``` ``` ## # A tibble: 2 × 2 ## term estimate ## <chr> <dbl> ## 1 (Intercept) 0.759 ## 2 accuracy 0.827 ``` $$ \widehat{\text{rating}} = 0.76 + 0.83 \times \text{accuracy} $$ Note: this is an example where `alpha` helps with overplotting ] .pull-right[ <img src="10-slides_files/figure-html/review-line-again-again-1.png" width="100%" style="display: block; margin: auto;" /> ] --- # Visualization of a continuous variable .pull-left[ .small[Recall: `geom_smooth(method = "lm")` allows us to skip the estimation step!] ``` r reviews |> ggplot(aes(x = accuracy, y = rating)) + geom_point(alpha = 0.25) + * geom_smooth( * method = "lm", # smoothing function * se = FALSE # omit confidence bands * ) ``` ] .pull-right[ <img src="10-slides_files/figure-html/tidy-review-model-geom_smooth-plot-1.png" width="100%" style="display: block; margin: auto;" /> ] --- # Multiple regression We're not limited to just one explanatory variable! -- $$ y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \cdots + \beta_n x_n + \varepsilon $$ <br> -- ``` r review_model_big <- lm(rating ~ accuracy + cleanliness + communication + location + checkin + value, data = reviews) ``` $$ `\begin{aligned} \widehat{\text{rating}} =& \widehat{\beta}_0 + \widehat{\beta}_1 \text{accuracy} + \widehat{\beta}_2 \text{cleanliness} + \\ &\widehat{\beta}_3 \text{communication} + \widehat{\beta}_4 \text{location} + \\ &\widehat{\beta}_5 \text{checkin} + \widehat{\beta}_6 \text{value} \end{aligned}` $$ --- # Multiple regression We started by estimating this **univariate** (aka **bivariate**) regression model: $$ \text{rating} = \beta_0 + \beta_1 \text{accuracy} + \varepsilon $$ -- Now we are estimating this **multivariate** regression model: $$ `\begin{aligned} \text{rating} =& \beta_0 + \beta_1 \text{accuracy} + \beta_2 \text{cleanliness} + \\ &\beta_3 \text{communication} + \beta_4 \text{location} + \\ &\beta_5 \text{checkin} + \beta_6 \text{value} + \varepsilon \end{aligned}` $$ -- Why are we doing this? Wasn't it complicated enough already?! -- We want to use these data to inform our Airbnb hosting strategy. What are the pros and cons of the two models for this purpose? --- # Multiple regression Will the coefficient on `accuracy` will be smaller, larger, or the same? Why? -- .small-code[ ``` r tidy(review_model_big, conf.int = TRUE) ``` ``` ## # A tibble: 7 × 7 ## term estimate std.error statistic p.value conf.low conf.high ## <chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> ## 1 (Intercept) -0.124 0.0178 -6.96 3.43e- 12 -0.159 -0.0892 *## 2 accuracy 0.217 0.00531 40.8 0 0.206 0.227 ## 3 cleanliness 0.227 0.00356 63.9 0 0.220 0.234 ## 4 communication 0.169 0.00507 33.4 1.45e-239 0.159 0.179 ## 5 location 0.0384 0.00428 8.97 3.25e- 19 0.0300 0.0468 ## 6 checkin 0.0578 0.00521 11.1 1.37e- 28 0.0476 0.0680 ## 7 value 0.313 0.00476 65.8 0 0.304 0.323 ``` ] -- $$ `\begin{aligned} \widehat{\text{rating}} =& -0.12 + 0.22 \times \text{accuracy} + 0.23 \times \text{cleanliness} + \\ &0.17 \times \text{communication} + 0.04 \times \text{location} + \\ &0.06 \times \text{checkin} + 0.31 \times \text{value} \end{aligned}` $$ --- # Interpretation for continuous variables $$ y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \cdots + \beta_n x_n + \varepsilon $$ -- ***Holding everything else constant***, a one unit increase in `\(x_n\)` is *associated* with a `\(\beta_n\)` change in `\(y\)`, on average -- $$ `\begin{aligned} \widehat{\text{rating}} =& -0.12 + 0.22 \times \text{accuracy} + 0.23 \times \text{cleanliness} + \\ &0.17 \times \text{communication} + 0.04 \times \text{location} + \\ &0.06 \times \text{checkin} + 0.31 \times \text{value} \end{aligned}` $$ On average, a one unit increase in accuracy rating is associated with 0.22 higher overall rating, holding everything else constant -- .tiny[ For the earlier model we had said > On average, a one unit increase in accuracy rating is associated with 0.83 higher overall rating ] --- # Good luck visualizing all this! .large[You can't just draw a single line! There are too many moving parts!] --- # Main challenges -- Each coefficient has its own estimate and standard errors -- **Solution:** Plot the coefficients and their errors with a *coefficient plot* -- The results change as you move sliders (continuous variables) up and down or flip switches (categorical variables) on and off -- **Solution:** Plot the *marginal effects* for the coefficients you're interested in --- # Coefficient plots Convert the model results to a data frame with `tidy()` .small-code[ ``` r # tidy the estimates (reformatting names is not required) review_coefs <- tidy( review_model_big, # get the model's coefficients conf.int = TRUE # include confidence intervals ) |> filter(term!="(Intercept)") review_coefs ``` ``` ## # A tibble: 6 × 7 ## term estimate std.error statistic p.value conf.low conf.high ## <chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> ## 1 accuracy 0.217 0.00531 40.8 0 0.206 0.227 ## 2 cleanliness 0.227 0.00356 63.9 0 0.220 0.234 ## 3 communication 0.169 0.00507 33.4 1.45e-239 0.159 0.179 ## 4 location 0.0384 0.00428 8.97 3.25e- 19 0.0300 0.0468 ## 5 checkin 0.0578 0.00521 11.1 1.37e- 28 0.0476 0.0680 ## 6 value 0.313 0.00476 65.8 0 0.304 0.323 ``` ] --- # Coefficient plots Plot the point estimate and confidence intervals with `geom_pointrange()` .left-code[ ``` r review_coefs |> ggplot(aes(x = estimate, y = fct_reorder(term, estimate))) + * geom_pointrange(aes(xmin = conf.low, * xmax = conf.high)) + * geom_vline(xintercept = 0, color = "red") + labs(x = "relationship with overall rating", y = NULL) ``` What do you take away from this? Should this inform where you decide to focus your investment as a host? ] .right-plot[  ] --- # Marginal effects plots **Remember we interpret individual coefficients while holding others constant** We move one slider while leaving all the other sliders and switches alone -- **The same principle applies to visualizing a variable's effect** -- Plug a bunch of values into the model and find the predicted outcome -- Plot the values and predicted outcome -- *We will not cover the process of creating marginal effects plots due to time constraints* --- # Marginal effects plots How do the multivariate and univariate regression lines compare? -- .pull-left[  ] .pull-right[ <span style="color:#B31B1B; font-weight:bold">Red line:</span> multivariate <span style="color:#3366FF; font-weight:bold">Blue line:</span> univariate What do you take away from this? Should this affect how much you invest in accuracy? ] --- # Stepping back Which of these plots would be more useful to Airbnb hosts? Why? .pull-left[  ] .pull-right[  ] --- # Not just OLS! The same techniques work for pretty much any statistical model R can run -- - OLS with high-dimensional fixed effects - Logistic, probit, and multinomial regression (ordered and unordered) - Multilevel (i.e., mixed and random effects) regression - Bayesian models - Machine learning models -- If it has coefficients and/or makes predictions, you can (and should) visualize it! --- class: inverse, center, middle name: example-10-2 # example-10-2:<br>regression-practice.R